7 Sales Incentive Best Practices for Tech Companies

Introduction

Most sales incentive programs in tech are built backwards. A sales leader identifies a revenue gap, finance approves a budget, and a SPIF or contest gets announced in a Monday-morning Slack post. Three weeks later, the numbers haven't moved, or they've moved in ways no one intended: top performers sandbagged deals to hit Q3 accelerators, mid-tier reps gave up after checking the leaderboard once, and the CSM team is quietly resentful that their retention work doesn't qualify for anything.

This isn't a budget problem. It's a design problem — and increasingly, a workforce problem.

When incentive architecture is misaligned with how a sales organization actually functions, the most immediate consequence is behavioral: reps optimize for the reward rather than the outcome the reward was meant to produce. The less visible consequence is organizational: a misaligned program loses credibility over time, and when credibility erodes, the most mobile members of the sales team (typically top performers with external options) begin recalibrating. In a tech sales environment where a mid-market AE ramp can take six to nine months and replacing an enterprise rep meaningfully disrupts pipeline continuity, the workforce cost of persistent incentive misalignment compounds quickly.

Sales incentive program design in tech is more demanding than it looks, because the sales environment itself is more segmented. A company might run SDR teams on activity-based metrics, AEs on bookings with accelerators, CSMs on net revenue retention, and a channel partner program on sell-through — simultaneously, under the same incentive umbrella. Getting the design wrong for any one of those populations has real consequences for pipeline, revenue predictability, and sales team stability.

This article covers seven best practices for designing sales incentive programs that actually change behavior in tech environments — from aligning rewards to specific actions, to building the governance and measurement discipline that keeps a program credible and commercially defensible over time.

Why Tech Sales Incentive Design Is Its Own Discipline

Tech sales organizations operate under conditions that make generic incentive templates unreliable. Deal cycles vary enormously — an SDR might book a meeting in a day while an enterprise AE manages a nine-month evaluation. Product complexity means that selling behavior isn't just about call volume or pipeline coverage; it increasingly involves technical demonstration, stakeholder mapping, and competitive displacement. And the workforce itself is data-literate, skeptical of opaque systems, and accustomed to reassessing the employment relationship when the economics don't hold.

Consider a mid-sized SaaS company running three separate incentive structures simultaneously: an SDR team rewarded on qualified meetings booked, an enterprise AE team on quota attainment with accelerator bands, and a CSM team nominally included in an incentive program but measured on metrics that don't reflect their actual renewal and expansion timeline. Each population has a different performance horizon and a different relationship to the outcomes it influences, and each receives a different signal about whether the incentive design respects how its role functions. When any one of those designs misfires, the consequences aren't only motivational. SDRs who optimize for meeting volume without quality signals produce pipeline that wastes AE capacity. AEs who manage deals to accelerator windows rather than customer timelines introduce forecasting distortion. CSMs who are structurally excluded from incentive eligibility eventually reach their own conclusion about whether their contribution is valued, and act on it.

Sales role segmentation — understanding that SDRs, AEs, CSMs, and channel partners operate on different performance horizons and influence different parts of the revenue cycle — is the foundation on which effective incentive design in tech is built. Without it, you're applying a single motivational logic to a workforce that doesn't work in a single way, and absorbing the downstream costs in attrition, pipeline quality, and forecasting reliability.

Best Practice 1: Align Incentives to Specific Behaviors, Not Just Outcomes

The most common incentive design error is rewarding an outcome — closed revenue, quota attainment, product attach rate — without tracing the specific behaviors that produce it. This matters because outcomes are lagging indicators. By the time a rep misses quota, the behavioral failure that caused it happened weeks or months earlier. An incentive program built entirely on outcome rewards gives sales reps a scorecard but not a map.

Behavior-linked incentive design works differently. It asks: what specific actions, executed consistently, produce the outcome we want — and can we create reward proximity to those actions? In a SaaS context, this might mean building incentive recognition around product demonstration completion rates, multi-stakeholder engagement within an account, or early-stage pipeline qualification accuracy, not just the deal that closes at the end of the quarter.

The principle of reward proximity matters here: the closer in time a reward follows the behavior it's intended to reinforce, the stronger the behavioral signal. A quarterly cash bonus for total bookings tells a rep they did well overall; a near-real-time recognition trigger for completing a competitive displacement call within 48 hours of a prospect's inquiry tells them specifically what to repeat. Both have a role, but the second is more instructive — and more useful earlier in a rep's ramp, when the connection between daily behavior and quarterly outcome is easiest to lose.

The organizational stakes of getting this wrong are highest during ramp periods. A new AE operating under an outcome-only incentive structure for their first two quarters receives no behavioral signal about whether their activity patterns are correct until the outcome data arrives. By then it is too late to course-correct, unless a manager has been actively coaching behavior alongside the incentive system. When manager bandwidth is limited and the incentive architecture doesn't carry the behavioral signal, ramp underperformance compounds. This is one of the more predictable failure modes in enterprise tech sales incentive programs, and it's also one of the more preventable ones.

This doesn't mean eliminating outcome-based rewards. Quota attainment and accelerators remain legitimate structural elements of a sales comp plan. The point is that outcome rewards should be supported by behavioral reinforcement earlier in the sales cycle — particularly for SDRs and mid-funnel roles where the connection between daily activity and quarterly revenue is easy to lose sight of — and that the design question isn't only about what to pay, but when and for what.

Best Practice 2: Design Incentives by Role — SDR, AE, CSM, and Channel Each Need Different Mechanics

A single incentive program that applies uniform mechanics across every sales role isn't equitable — it's indifferent to how those roles actually function. Designing by role means matching the reward structure to the performance horizon, the scope of influence, and the behaviors that matter most for each function. It also means recognizing that when the design is wrong for a role, the attrition signal tends to come from the strongest performers in that role first — the ones with the clearest sense of what their contribution is worth and the most options.

SDRs operate on short cycles with high activity volume. Incentive mechanics for this population should reward consistent, quality-generating behaviors — qualified meetings booked, accurate ICP-fit scoring, multi-touch sequence completion — rather than downstream revenue outcomes they don't control. SPIFs and recognition-based rewards work well here because the feedback loop is fast and the behaviors are visible. Outcome-only incentives for SDRs create misalignment: they generate urgency without direction, pushing reps toward volume rather than quality. The retention risk is specific: SDRs who consistently produce quality pipeline but are evaluated on a metric that doesn't distinguish quality from volume will eventually conclude the system doesn't see what they're doing.

AEs manage longer cycles with more variable complexity. Quota attainment with accelerator bands, where the reward rate steps up at thresholds such as 100%, 120%, and 150% of quota, remains the dominant structural mechanic for good reason: it pays progressively more for overperformance and preserves motivation beyond the quota threshold. The design risk is the accelerator cliff: reps slow-play deals at the end of a period to carry bookings into the next period's accelerator range. This introduces forecasting distortion and erodes management trust in pipeline data. Measuring attainment over rolling or multiple periods, rather than resetting it at each period boundary, reduces the incentive to game the timing.
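To make the accelerator-band mechanic concrete, here is a minimal sketch of a marginal (tiered) payout calculation. Only the 100%, 120%, and 150% thresholds come from the text above; the base commission rate, the multipliers, and the function name are hypothetical values chosen for illustration, not a recommended plan.

```python
# Hypothetical accelerator schedule: (attainment threshold, rate multiplier).
# The thresholds mirror the 100% / 120% / 150% bands in the text; the
# multipliers and the 10% base rate are illustrative assumptions only.
BANDS = [(0.0, 1.0), (1.0, 1.5), (1.2, 2.0), (1.5, 2.5)]


def commission(bookings: float, quota: float, base_rate: float = 0.10) -> float:
    """Marginal payout: each multiplier applies only to bookings inside its band."""
    total = 0.0
    for i, (start, multiplier) in enumerate(BANDS):
        lower = start * quota
        # The last band has no upper limit.
        upper = BANDS[i + 1][0] * quota if i + 1 < len(BANDS) else float("inf")
        in_band = max(0.0, min(bookings, upper) - lower)
        total += in_band * base_rate * multiplier
    return total
```

Under these assumed numbers, a rep at 130% of a 1,000,000 quota would earn 100,000 on the first band, 30,000 on the second, and 20,000 on the third. Because the higher rate applies only to bookings above each threshold, there is no sudden jump in total payout at a band boundary, although the timing incentive described above still exists whenever attainment resets each period.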

CSMs present a distinct design challenge because their impact on revenue — through retention, expansion, and renewal — is real but indirect and often long-cycle. Excluding CSMs from incentive eligibility sends a clear organizational signal: their revenue contribution doesn't count. In environments where CSM-led expansion and net revenue retention are material to the business's ARR trajectory, treating the CSM team as outside the incentive architecture is both a motivational and a structural misalignment. Effective CSM incentive design focuses on net revenue retention targets, health score improvement milestones, and expansion revenue contribution — with measurement windows that reflect the actual timeline for those outcomes to materialize.
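Net revenue retention is commonly computed as the recurring revenue a fixed customer cohort generates at the end of a window, including expansion and net of contraction and churn, divided by that cohort's recurring revenue at the start. A minimal sketch follows; the function and parameter names are illustrative, and an actual plan would still need to define the cohort and the measurement window.

```python
def net_revenue_retention(starting_arr: float, expansion: float,
                          contraction: float, churn: float) -> float:
    """NRR for a fixed customer cohort over one measurement window.

    All inputs are recurring-revenue amounts for the same cohort; revenue
    from customers acquired during the window is excluded by definition.
    """
    if starting_arr <= 0:
        raise ValueError("starting_arr must be positive")
    return (starting_arr + expansion - contraction - churn) / starting_arr
```

For example, a cohort starting at 1,000,000 in ARR with 150,000 of expansion, 30,000 of contraction, and 50,000 of churn would come out at 1.07, or 107%.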

Channel partners operate outside the direct employment relationship, which changes the design constraints entirely. B2B channel incentive programs — also called partner incentive programs or channel loyalty programs — must motivate distributor and reseller behavior without the management levers available for internal sales teams. Sell-through metrics, product certification completion, and co-marketing participation are common behavioral anchors. Reward structures here need to account for the partner's own economics: a margin-focused reseller will respond differently to an incentive than a volume-driven distributor.

Best Practice 3: Use SPIFs With Intention, Not as a Last Resort

A Sales Performance Incentive Fund — SPIF — is a short-term, targeted reward for a specific sales behavior or outcome, layered on top of the standard compensation plan. Used well, SPIFs create behavioral urgency around a discrete objective: accelerating a specific product's attach rate, driving activity in an underperforming territory, or generating pipeline in a new market segment before end of quarter. Used poorly, they become a reflexive response to revenue shortfalls — announced too late, structured too vaguely, and forgotten as soon as the quarter closes.

The behavioral mechanism behind a well-designed SPIF is temporal focus: by creating a defined reward window around a specific action, the program concentrates sales attention in a way that a permanent incentive structure can't replicate. That concentration effect is the SPIF's value, and it depends entirely on how precisely the target behavior is defined.

Three design principles improve SPIF effectiveness in tech environments. First, define the eligible behavior, not just the eligible outcome — "first meeting completed with a net new enterprise account" rather than "new enterprise pipeline added." Second, keep the reward window short enough to maintain urgency: four to six weeks is a reasonable range for most SPIF objectives; longer windows dilute the temporal focus effect. Third, communicate the SPIF before the behavior window opens, not during it — reps need enough lead time to change their activity patterns, and a SPIF announced in the last week of a quarter is more noise than signal.

The governance dimension of SPIF design is often underweighted. Who owns the SPIF calendar? Who approves eligibility criteria, and what process handles disputes when a rep believes a qualifying event was incorrectly excluded? In the absence of documented criteria and a visible decision process, SPIF rulings become a source of perceived favoritism — particularly when the same reps consistently appear in the eligible population and others conclude the mechanics are designed around the top quartile. Documenting eligibility rules, publishing the criteria before the window opens, and establishing a clear exception-handling process addresses this risk without adding meaningful administrative burden.

One additional risk worth naming: SPIF saturation. When short-term incentives run continuously or overlap, the urgency signal degrades. Reps learn to wait for the next SPIF rather than executing consistently. A disciplined SPIF calendar — no more than two to three per quarter, with clear behavioral rationale for each — preserves the mechanism's effectiveness.
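The calendar rules discussed in this section (announce before the window opens, keep the window to roughly six weeks at most, avoid overlap, cap the count per quarter) are simple enough to check mechanically. The sketch below is one possible way to do that; the `Spif` record, the field names, and the default limits are assumptions for illustration, not part of any particular tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Spif:
    name: str
    announced: date
    starts: date
    ends: date  # inclusive last day of the reward window


def calendar_issues(spifs: list[Spif], max_per_quarter: int = 3,
                    max_window_days: int = 42) -> list[str]:
    """Return human-readable warnings for a proposed SPIF calendar."""
    issues = []
    per_quarter = {}
    for s in spifs:
        if s.announced >= s.starts:
            issues.append(f"{s.name}: announced on or after the window opens")
        if (s.ends - s.starts).days + 1 > max_window_days:
            issues.append(f"{s.name}: window longer than {max_window_days} days")
        # Count each SPIF against the calendar quarter in which it starts.
        quarter = (s.starts.year, (s.starts.month - 1) // 3 + 1)
        per_quarter[quarter] = per_quarter.get(quarter, 0) + 1
    for (year, q), count in sorted(per_quarter.items()):
        if count > max_per_quarter:
            issues.append(f"{year} Q{q}: {count} SPIFs exceeds limit of {max_per_quarter}")
    # Flag any SPIF that starts before an earlier one has ended.
    latest = None
    for s in sorted(spifs, key=lambda s: s.starts):
        if latest is not None and s.starts <= latest.ends:
            issues.append(f"{s.name}: overlaps {latest.name}")
        if latest is None or s.ends > latest.ends:
            latest = s
    return issues
```

An empty list means the calendar passes these particular checks; it says nothing about whether each SPIF has a sound behavioral rationale, which remains a judgment call for the program owner.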

Best Practice 4: Balance Cash Rewards With Non-Cash Recognition

The assumption that cash is always the most motivating reward is persistent in sales culture and not entirely wrong — but it's incomplete. Research on reward design suggests that non-cash rewards can produce stronger recall and behavioral association than equivalent cash values, particularly for experiences and tangible awards. The mechanism is partly psychological: cash is fungible and gets absorbed into general income perception, while a distinctive experience or recognition moment carries a separate memory trace. This is a directional finding rather than a settled empirical claim — organizations should treat it as design guidance proportionate to their context, not as a prescription.

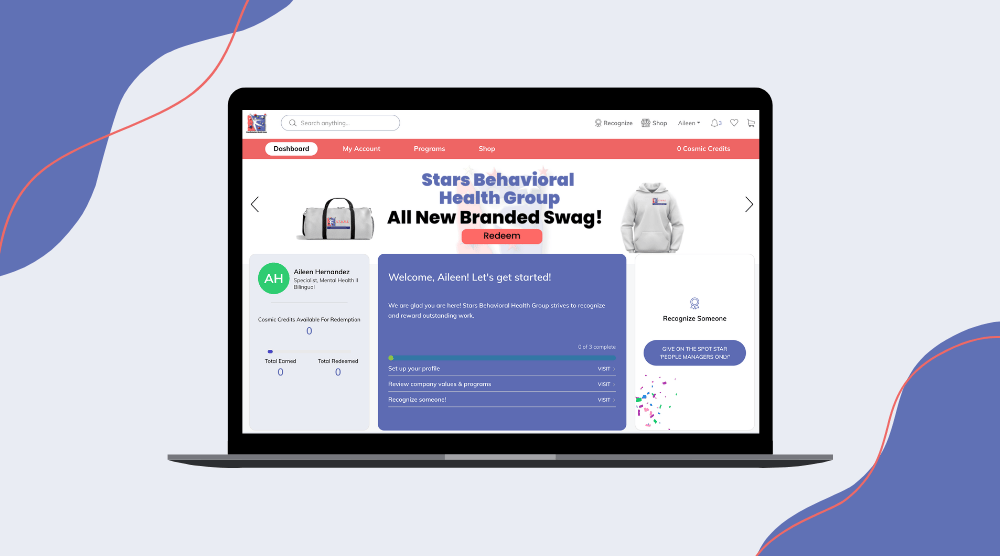

This matters for incentive program design because it expands the reward toolkit available to sales managers and HR practitioners beyond the compensation plan. Non-cash recognition — whether public acknowledgment, experience-based rewards, points-redeemable catalogs, or career development opportunities — addresses motivational needs that cash doesn't reach, including the need for visibility, peer validation, and a sense that individual contribution has been specifically noticed.

The retention implication is most acute for the mid-tier of a sales team. Top performers typically receive visibility through quota attainment and President's Club-style programs. The middle 60% or so of a sales team, whose cumulative contribution often exceeds that of the recognized leaders but who rarely appear on a bookings leaderboard, presents a different design challenge. For this population, the question isn't whether their performance meets a threshold; it's whether the incentive architecture sees them at all. An outcome-only incentive architecture that consistently recognizes the same top quartile while the middle tier executes reliably and invisibly is generating a slow-moving retention signal that rarely shows up until a resignation.

For tech sales teams, a few applications are worth considering. Public recognition for behavioral milestones — completing a competitive certification, leading a product demo for a strategic prospect, coaching a junior SDR through their first enterprise call — creates social proof around desirable behaviors and makes the middle tier visible in a way that bookings rankings cannot. Experience rewards tied to President's Club-style programs remain effective for top performers because of the status and exclusivity signal they carry, not primarily because of their cash equivalent value.

The practical design question isn't "cash or non-cash" but "which behaviors warrant which reward type." High-stakes, high-discretion behaviors — closing a competitive displacement deal, retaining an at-risk enterprise account — justify cash-linked outcomes because the financial stakes of the behavior are clear. Consistent, repeatable, culture-building behaviors are often better served by recognition that names the action specifically and makes it visible to peers.

Best Practice 5: Build Transparency and Governance Into the Program

A sales incentive program that produces correct outcomes but is perceived as opaque or arbitrary will lose credibility faster than one with a simpler structure that everyone understands. Transparency in incentive design isn't primarily about fairness signaling — it's a functional requirement for the program to work as intended.

When reps don't understand how rewards are calculated, they can't modulate their behavior to earn them. A comp plan that reps describe as confusing isn't just a communication failure; it's a motivational failure. The incentive-to-behavior linkage breaks when the behavioral pathway to the reward is unclear. This is especially acute in enterprise tech sales, where deal complexity and variable commission treatments — overlay splits, solution selling adjustments, partner co-sell credits — can make attainment calculations genuinely difficult to follow in real time.

Credibility erosion has a workforce consequence worth making explicit: when top performers conclude that the incentive architecture is opaque or inconsistently applied, they don't usually raise it as an HR issue — they update their external option assessment. Documenting program rules, publishing eligibility criteria in advance, and applying exception-handling consistently isn't only a fairness mechanism; it's a retention mechanism.

Governance mechanisms reduce the two most common sources of program distrust: perceived favoritism in discretionary awards, and inconsistent rule application when deal exceptions arise. Discretionary awards — manager-nominated recognition, spot bonuses, or SPIF eligibility rulings — benefit from documented criteria and a visible decision process, even if the award itself remains manager-driven. Exception handling for split deals, overlapping territories, and late-stage account transitions should be documented before the situation arises. Manager training on consistent application is an underinvested dimension here: even well-designed governance rules underperform when applied unevenly across teams.

Leaderboard mechanics warrant specific attention. Real-time leaderboards serve a dual function: they create competitive visibility for top performers and communicate the performance gap to everyone else. For roughly the top quartile of a sales team, this is motivating. For the middle tier, a leaderboard that persistently shows the same names at the top can function as a demotivating signal — "I'm not winning this, so why focus on it?" Thoughtful governance means deciding when leaderboards are appropriate (time-limited SPIFs with reset potential), what they display (activity metrics, not just revenue outcomes), and whether the competitive dynamic they introduce serves the program's behavioral goals or undermines them.

Best Practice 6: Protect Intrinsic Motivation While Driving Performance

Incentive programs create an extrinsic reward structure around behaviors that, for some sales reps, are already intrinsically motivated — the satisfaction of solving a customer's problem, closing a complex deal, or building a long-term account relationship. When extrinsic rewards are applied without care to these behaviors, they can crowd out the intrinsic motivation that was already there. This is the crowding-out effect, and it's a real design risk in high-complexity, consultative tech sales environments.

The crowding-out effect is most likely to occur when rewards are controlling rather than informational — when the incentive signals "we're paying you to do this because we don't trust you to do it otherwise" rather than "we're acknowledging that this contribution matters." The practical distinction is subtle but designable: an incentive that rewards a specific behavior with an accompanying acknowledgment of why that behavior creates value tends to reinforce the intrinsic narrative; a pure cash trigger with no contextual framing tends to reduce the behavior to a transaction.

The organizational consequence of this dynamic compounds over time. A sales team whose internal frame shifts gradually from "I'm building pipeline because that's what I do" to "I'm building pipeline because I get paid per meeting" becomes more fragile as a unit: more dependent on the extrinsic trigger, less resilient when the trigger is reduced or removed, and less effective at the complex, judgment-intensive selling behaviors that don't respond well to transactional framing. In enterprise tech environments where competitive displacement, multi-stakeholder influence, and long-cycle relationship development are the core activities that drive revenue, this fragility is commercially significant.

A practical safeguard is to complement cash and points-based incentives with recognition that articulates the value of the behavior, not just the fact that it happened. "You closed a three-year expansion deal on an account that was at churn risk six months ago — here's what that means for the business" is more durable than a payout alone. It reinforces both the extrinsic outcome and the intrinsic story. This framing doesn't require a formal recognition program; it requires managers who are equipped to have the conversation and a program design that prompts it.

Best Practice 7: Measure What the Incentive Was Designed to Change

Sales incentive programs are hypotheses: if we reward behavior X, we expect outcome Y to improve. Most programs get measured on Y — revenue, quota attainment, pipeline coverage — without tracking whether X actually changed. When results disappoint, the program gets redesigned or the budget gets cut, but the underlying behavioral hypothesis was never tested.

Measuring incentive effectiveness requires defining two layers of metrics before the program launches. The first layer is behavioral: what specific actions should change in frequency, quality, or timing as a result of the incentive? For an SDR SPIF targeting multi-stakeholder outreach, the behavioral metric might be the number of unique contacts engaged per account per week. For an AE accelerator targeting expansion revenue, it might be the rate of expansion conversations initiated in accounts above a health score threshold. These metrics should be measurable with existing CRM data — if they require new data infrastructure, the program design may be outrunning measurement capability.
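As an illustration of a behavioral metric that existing CRM data can usually support, the sketch below counts unique contacts engaged per account per ISO week. The input shape (tuples of account ID, contact ID, and activity date) is an assumption about how an activity export might look, not a reference to any specific CRM's schema.

```python
from collections import defaultdict
from datetime import date


def unique_contacts_per_account_week(activities):
    """Count distinct contacts engaged per (account, ISO year, ISO week).

    `activities` is an iterable of (account_id, contact_id, activity_date)
    tuples, a hypothetical export format. Repeat touches to the same
    contact within a week are counted once.
    """
    contacts = defaultdict(set)
    for account_id, contact_id, day in activities:
        iso_year, iso_week, _ = day.isocalendar()
        contacts[(account_id, iso_year, iso_week)].add(contact_id)
    return {key: len(ids) for key, ids in contacts.items()}
```

Tracking this number before, during, and after the SPIF window is what shows whether the targeted behavior actually changed.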

The second layer is outcome-linked: did the behavioral change produce the revenue or retention outcome the incentive was intended to drive? A SPIF that drove a 40% increase in first meetings booked looks successful until you check whether those meetings converted to pipeline at the same rate as non-SPIF-driven meetings. If the conversion rate dropped, the SPIF may have incentivized activity volume at the expense of activity quality — a common distortion in programs that reward inputs without quality controls.
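The volume-versus-quality check described here comes down to comparing two conversion rates. The following sketch shows one way to express it; the function name, the `max_rate_drop` tolerance, and the numbers in the usage note are hypothetical.

```python
def spif_quality_check(base_meetings: int, base_converted: int,
                       spif_meetings: int, spif_converted: int,
                       max_rate_drop: float = 0.02) -> dict:
    """Compare meeting volume and meeting-to-pipeline conversion across periods.

    `max_rate_drop` is an assumed tolerance: the conversion rate may fall by
    this many points (as a fraction) before the SPIF is flagged.
    """
    if base_meetings <= 0 or spif_meetings <= 0:
        raise ValueError("meeting counts must be positive")
    base_rate = base_converted / base_meetings
    spif_rate = spif_converted / spif_meetings
    return {
        "volume_lift": spif_meetings / base_meetings - 1,
        "baseline_rate": base_rate,
        "spif_rate": spif_rate,
        "quality_dropped": base_rate - spif_rate > max_rate_drop,
    }
```

In a made-up case where meetings rise from 100 to 140 (a 40% lift) while conversions go from 30 to 28, the conversion rate falls from 30% to 20% and the check flags the SPIF: more meetings were booked, yet fewer of them turned into pipeline.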

The measurement discipline also generates the leading indicators that allow sales leadership and HR to identify program drift before it becomes a workforce signal. Declining behavioral metric performance in the middle of a program cycle — not declining quota attainment, but declining engagement with the incentivized behaviors — is an early warning that something in the design or execution is breaking down. Monthly behavioral metric reviews during active SPIF windows give program owners the opportunity to course-correct before a poorly designed mechanic produces durable distortion.

Cadence matters. Monthly check-ins during active SPIF windows and quarterly program reviews are a minimum. The review should be a structured conversation between sales leadership, HR, and Sales Ops — not an after-action report that nobody acts on — and the output should be a documented decision: maintain, adjust, or retire the current design. Presenting behavioral and outcome metrics together in that conversation, with a clear line between what changed and what the change produced, is what makes the program a managed investment rather than an annual spend.

Quick Takeaways

- Design incentives around specific behaviors, not just outcomes. Outcome-only rewards give sales reps a scorecard without a map — behavior-linked incentives create a clearer connection between daily actions and program eligibility, and are especially critical during ramp periods when behavioral feedback is most needed.

- Segment incentive mechanics by role. SDRs, AEs, CSMs, and channel partners operate on different performance horizons. A single incentive structure applied across all four will misalign for at least three of them — and the attrition signal from misalignment tends to come from the strongest performers first.

- SPIFs work through temporal focus — preserve that mechanism. Short, precisely defined reward windows around specific behaviors drive concentration. Vague objectives, long windows, SPIF saturation, and absent governance degrade the effect.

- Cash is not the only motivational lever. Non-cash recognition and experience rewards address visibility, peer validation, and behavioral memory in ways that cash payments don't reliably reach — and are particularly important for mid-tier performers whose contribution an outcome-only architecture consistently fails to see.

- Transparency is a retention mechanism, not just a fairness signal. When reps can't follow how rewards are calculated, the incentive-to-behavior linkage breaks — and credibility failures tend to accelerate attrition among the most mobile members of the team. Document criteria, handle exceptions in advance, and apply rules consistently.

- Protect intrinsic motivation. Excessive extrinsic reward triggers — especially automated cash payments without contextual framing — can crowd out the internal motivation that drives high-complexity consultative selling. The organizational consequence compounds over time.

- Measure the behavior change, not just the revenue outcome. Define behavioral metrics before launch, track conversion quality alongside volume, and conduct structured monthly reviews during active SPIF windows. Behavioral metric trends are the leading indicator; revenue outcomes are the lagging confirmation.

Conclusion

Sales incentive programs in tech are often treated as a compensation design problem — a question of how much to pay and when. The seven best practices here argue for a different framing: incentive design is a behavioral and workforce design problem. What specific actions need to change? Who needs to change them, in which role, on what timeline? What reward type best reinforces the behavior without crowding out what's already working? What governance ensures the program remains credible when complexity and edge cases emerge? And what does the program communicate to the mid-tier of the sales organization — the performers who don't appear on leaderboards but whose cumulative contribution is real?

Getting this right isn't a one-time design exercise. Effective incentive programs are actively managed — reviewed against behavioral metrics, adjusted when distortion signals appear, and rebuilt when the underlying sales motion changes significantly. In tech specifically, where product lines evolve, sales motions shift from transactional to enterprise and back, and the workforce recalibrates its expectations continuously, a program designed eighteen months ago may be answering a question the business is no longer asking.

For HR Directors, Chief People Officers, and VPs of Sales who own the incentive architecture, the accountability question is not only whether the program is paying out correctly — it's whether the program is doing the behavioral work it was designed to do, and whether it is communicating to the sales team that the organization understands how their work actually functions. The most durable sales incentive programs are the ones that sales teams understand, trust, and feel are designed for the way they actually work. That trust is built through behavioral precision, structural transparency, and a measurement discipline that treats the program as a hypothesis worth testing rather than an investment worth defending.

Frequently Asked Questions

-

Effective SaaS incentive programs align reward mechanics to role-specific performance horizons — activity-based for SDRs, attainment-based for AEs, retention-and-expansion-based for CSMs. Behavioral precision matters more than reward size: a clearly defined behavior tied to a proximate reward outperforms a large bonus tied to a lagging outcome metric. Transparency and consistent governance are non-negotiable for maintaining program credibility — and for retaining the sales talent whose performance the program is designed to sustain.

-

SDRs benefit from short-cycle, activity-linked rewards that reinforce pipeline quality behaviors. AEs respond to quota attainment structures with accelerator bands that sustain motivation past threshold. CSMs need incentives tied to net revenue retention and expansion metrics, with measurement windows reflecting actual renewal timelines. Channel partners require sell-through and engagement mechanics designed around the partner's own economic incentives, not internal sales logic.

-

Measure two layers: behavioral metrics (did the targeted actions change in frequency or quality?) and outcome metrics (did those behavioral changes produce the intended revenue or retention result?). Behavioral metrics should be defined before the program launches, not after results disappoint. Conversion rate from incentivized activity to qualified pipeline is a critical quality control metric for any SPIF or activity-based reward. Declining behavioral engagement during a program cycle is an early warning signal — more actionable than waiting for quota attainment data.

-

Define the eligible behavior specifically — "first meeting completed with net new enterprise account" rather than "new pipeline added." Keep the reward window to four to six weeks to maintain temporal focus. Communicate before the window opens so reps have time to change their activity patterns. Document eligibility criteria and exception-handling rules before launch — not after disputes arise. Limit SPIFs to two to three per quarter to prevent saturation.

-

Document reward criteria and exception-handling rules before the program launches. For discretionary awards, a visible decision process reduces perceived arbitrariness even when the final call remains manager-driven. Train managers on consistent rule application — governance documents underperform when applied unevenly across teams. Apply leaderboard mechanics selectively: they motivate top-quartile performers but can demotivate the middle tier when competitive gaps are persistent and visible. Consistent rule application is the primary driver of program trust — and program trust is a meaningful dimension of sales talent retention.